JARVIS 2.0 — Building an Autonomous Multi-Agent AI System


JARVIS v1 was one file, one LLM call, one if-elif chain. It worked for a demo. JARVIS 2.0 is a fundamentally different architecture: a supervisor agent that understands intent and routes to specialized sub-agents — a research agent, a code agent, a writing agent, a tool-use agent. This is the architecture that eventually shaped the production AI system I built at Idyllic Services.

Why Single-Agent AI Has a Ceiling

The limitation of a single-agent system becomes clear quickly. You have one LLM handling everything: research, reasoning, code execution, writing, API calls. The prompt gets massive. The LLM has to maintain context about every possible capability. Response quality degrades as the task becomes more complex.

The human analogy: you wouldn't want one person to simultaneously be your researcher, your developer, your writer, and your project manager. You'd have specialists who each do one thing well, coordinated by a manager who understands the overall goal.

That's multi-agent AI. A supervisor that understands intent. Specialized agents that execute.

The Architecture

                  User Query
                       │
                       ▼
    ┌─────────────────────────────────────┐
    │          Supervisor Agent           │
    │  - Understands user intent          │
    │  - Decomposes complex tasks         │
    │  - Routes to appropriate sub-agents │
    │  - Synthesizes final response       │
    └──────┬────────────┬───────────┬─────┘
           │            │           │
           ▼            ▼           ▼
    ┌────────────┐ ┌─────────┐ ┌─────────┐
    │ Research   │ │ Code    │ │ Writing │
    │ Agent      │ │ Agent   │ │ Agent   │
    │            │ │         │ │         │
    │ Web search │ │ Execute │ │ Draft,  │
    │ RAG query  │ │ Python  │ │ Format, │
    │ Summarize  │ │ Debug   │ │ Review  │
    └────────────┘ └─────────┘ └─────────┘

The Supervisor Agent

The supervisor is the brain. It receives the user's request, understands what's needed, and decides which sub-agents to activate — and in what order.
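To make "which sub-agents, and in what order" concrete: the supervisor code that follows reads three fields from every plan step (`agent`, `task`, and `output_key`). LLM output is not guaranteed to have that shape, so it can help to validate the plan before running anything. The sketch below is one way to do that; `parse_plan`, `KNOWN_AGENTS`, and the example JSON are illustrative assumptions, not part of JARVIS itself.

```python
import json

# Assumed agent names; they mirror the three agents in the diagram.
KNOWN_AGENTS = {"research", "code", "writing"}
REQUIRED_KEYS = {"agent", "task", "output_key"}


def parse_plan(raw: str) -> list[dict]:
    """Parse the supervisor LLM's JSON plan and reject malformed steps."""
    plan = json.loads(raw)
    steps = plan.get("steps") if isinstance(plan, dict) else None
    if not isinstance(steps, list) or not steps:
        raise ValueError("plan must contain a non-empty 'steps' list")
    for i, step in enumerate(steps):
        if not isinstance(step, dict):
            raise ValueError(f"step {i} is not an object")
        missing = REQUIRED_KEYS - step.keys()
        if missing:
            raise ValueError(f"step {i} is missing keys: {sorted(missing)}")
        if step["agent"] not in KNOWN_AGENTS:
            raise ValueError(f"step {i} names unknown agent {step['agent']!r}")
    return steps


# A made-up example of what the LLM might return for a two-step request.
example = """
{"steps": [
  {"agent": "research", "task": "Find recent benchmarks for vector databases",
   "output_key": "findings"},
  {"agent": "writing", "task": "Summarize the findings in 200 words",
   "output_key": "summary"}
]}
"""

steps = parse_plan(example)
print([s["agent"] for s in steps])
```

Rejecting a bad plan up front is cheaper than discovering a missing key halfway through execution, after some agents have already spent tokens.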

    class SupervisorAgent:
        def __init__(self):
            self.agents = {
                "research": ResearchAgent(),
                "code": CodeAgent(),
                "writing": WritingAgent(),
            }
            # LLMClient is a thin wrapper around the model API (not shown here)
            self.llm = LLMClient(model="claude-3-5-sonnet")

        def process(self, user_query: str) -> str:
            # Step 1: Understand intent and plan.
            # With response_format="json" the client is assumed to return parsed JSON.
            plan = self.llm.complete(
                system="You are a supervisor AI. Analyze the user's request and create a step-by-step plan. Return JSON with a 'steps' list; each step has 'agent' (research, code, or writing), 'task', and 'output_key'.",
                user=user_query,
                response_format="json",
            )

            # Step 2: Execute the plan, passing the accumulated context to each agent
            context = {"original_query": user_query}
            for step in plan["steps"]:
                agent = self.agents.get(step["agent"])
                if agent is None:
                    # The LLM named an agent that doesn't exist; record it instead of crashing
                    context[step["output_key"]] = f"Skipped: unknown agent '{step['agent']}'"
                    continue
                context[step["output_key"]] = agent.execute(step["task"], context)

            # Step 3: Synthesize final response
            return self.llm.complete(
                system="Synthesize the work done by the sub-agents into a coherent final response.",
                user=str(context),
            )

The Research Agent

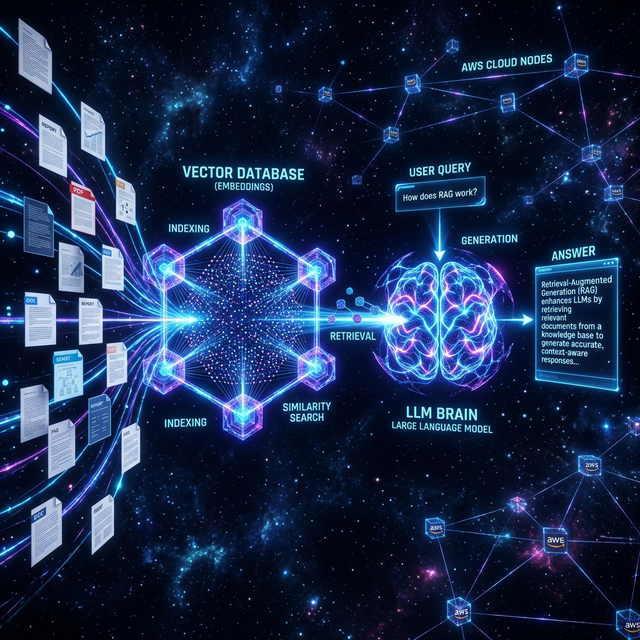

The research agent handles information gathering — web search, RAG queries against a knowledge base, document summarization:

    class ResearchAgent:
        def __init__(self):
            self.search_tool = WebSearchTool()
            self.rag = RAGRetriever()
            self.llm = LLMClient()

        def execute(self, task: str, context: dict) -> str:
            # Decide: web search or knowledge base?
            strategy = self.llm.complete(
                system='Given a research task, decide which tool to use. Return JSON: {"tool": "web_search"} or {"tool": "knowledge_base"}.',
                user=task,
                response_format="json",  # needed so strategy["tool"] works below
            )

            if strategy["tool"] == "web_search":
                results = self.search_tool.search(task)
            else:
                results = self.rag.retrieve(task, top_k=3)

            # Summarize results relevant to the task
            return self.llm.complete(
                system="Summarize these search results to answer the specific task.",
                user=f"Task: {task}\n\nResults: {results}",
            )

The Code Agent

The code agent writes Python and actually runs it in a sandboxed environment. If the first attempt fails, it feeds the error back to the LLM and retries once.
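The sandbox itself isn't shown in this post. As a rough stand-in, assuming nothing more than the `run(code, timeout=30)` call the code agent makes, a subprocess-based runner could look like the sketch below. `SubprocessSandbox` is a hypothetical name, and a child process is not a real security boundary: anything running untrusted, LLM-generated code in production would need a container, VM, or similar isolation.

```python
import subprocess
import sys
import tempfile
from pathlib import Path


class SubprocessSandbox:
    """Hypothetical stand-in for the sandbox. NOT a security boundary."""

    def run(self, code: str, timeout: int = 30) -> str:
        # Write the snippet to a throwaway directory and run it in a child process.
        with tempfile.TemporaryDirectory() as workdir:
            script = Path(workdir) / "snippet.py"
            script.write_text(code, encoding="utf-8")
            proc = subprocess.run(
                [sys.executable, "-I", str(script)],  # -I: isolated mode
                cwd=workdir,
                capture_output=True,
                text=True,
                timeout=timeout,  # raises subprocess.TimeoutExpired on overrun
            )
        if proc.returncode != 0:
            # Surface the traceback so the caller can pass it back to the LLM.
            raise RuntimeError(proc.stderr.strip())
        return proc.stdout


sandbox = SubprocessSandbox()
print(sandbox.run("print(2 + 2)"))
```

Raising on a non-zero exit code matters for the agent: the exception text is what gets handed back to the LLM when it tries to fix its own code.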

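    class CodeAgent:
        def __init__(self, llm=None, sandbox=None):
            # Sandbox is a placeholder name for whatever isolated runner you use
            self.llm = llm or LLMClient()
            self.sandbox = sandbox or Sandbox()

        def execute(self, task: str, context: dict) -> dict:
            # Generate code
            code = self.llm.complete(
                system="Write Python code to accomplish the task. Output only valid Python.",
                user=f"Task: {task}\nContext: {context}",
            )

            # Execute in sandbox
            try:
                result = self.sandbox.run(code, timeout=30)
                return {"code": code, "result": result, "success": True}
            except Exception as e:
                # Self-correction: give the model one chance to fix its own error
                fixed_code = self.llm.complete(
                    system="Fix the Python code error. Output only valid Python.",
                    user=f"Code:\n{code}\n\nError:\n{e}",
                )

            try:
                result = self.sandbox.run(fixed_code, timeout=30)
                return {"code": fixed_code, "result": result, "success": True}
            except Exception as e:
                # Report the failure so the supervisor can react instead of crashing
                return {"code": fixed_code, "result": str(e), "success": False}

How This Architecture Became Production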

The multi-agent architecture I designed for JARVIS 2.0 directly informed the supervisor agent pattern I implemented in the agentic AI pipeline at Idyllic Services. The core insight — a smart supervisor routing to specialized agents instead of one monolithic prompt handling everything — is what enabled the ~99% token reduction and ~10× throughput improvement.

Instead of one massive prompt with all possible instructions, each agent receives only the instructions relevant to its specific task. Smaller prompts. Faster responses. Lower cost. Better results.
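A toy comparison shows the mechanism (not the production numbers). The instruction strings and the word-count proxy below are made up for illustration; a real system would have far longer prompts and would measure with the model's own tokenizer.

```python
# Hypothetical per-agent instructions; real prompts would be much longer.
AGENT_INSTRUCTIONS = {
    "research": "Search the web or the knowledge base, then summarize what is relevant.",
    "code": "Write Python for the task, run it in the sandbox, and fix any errors.",
    "writing": "Draft, format, and review prose for the target audience.",
}


def monolithic_prompt() -> str:
    """Single-agent style: every instruction is sent on every call."""
    return "\n".join(AGENT_INSTRUCTIONS.values())


def routed_prompt(agent: str) -> str:
    """Multi-agent style: only the chosen agent's instructions are sent."""
    return AGENT_INSTRUCTIONS[agent]


def word_count(text: str) -> int:
    # Crude proxy for tokens, good enough to show the direction of the effect.
    return len(text.split())


full = word_count(monolithic_prompt())
for name in AGENT_INSTRUCTIONS:
    print(f"{name}: {word_count(routed_prompt(name))} of {full} words")
```

With three short instructions the saving is small. It grows with every capability added to the system, because the monolithic prompt carries all of them on every call while a routed prompt carries one.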

Key Lessons from Building Multi-Agent Systems

- The supervisor is everything. A bad supervisor produces incoherent multi-agent work. Invest in the routing and synthesis logic.

- Agents should be narrow. The more specific an agent's responsibility, the better it performs. A "code agent" that also does research is worse than two specialized agents.

- Context passing is the hard part. How agents share information determines the quality of the final synthesis. Design your context schema carefully.

- Always have a fallback. When an agent fails, the supervisor needs to know — and have a recovery path — rather than silently producing broken output.
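The last two lessons can be sketched together. One option, under the assumption that the shared context is a typed object instead of a loose dict, is to record every step's outcome with an explicit success flag so the supervisor can see failures. `StepResult`, `RunContext`, and `run_step` are hypothetical names for illustration, and the two stand-in agents below are stubs.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class StepResult:
    """One agent's output, with an explicit success flag."""
    agent: str
    output: str = ""
    success: bool = True
    error: str = ""


@dataclass
class RunContext:
    """Shared state passed between agents (a hypothetical schema)."""
    original_query: str
    results: dict[str, StepResult] = field(default_factory=dict)

    def failed_steps(self) -> list[str]:
        return [key for key, r in self.results.items() if not r.success]


AgentFn = Callable[[RunContext], str]


def run_step(ctx: RunContext, key: str, agent: str, primary: AgentFn,
             fallback: Optional[AgentFn] = None) -> StepResult:
    """Run an agent; on failure try the fallback, and always record the outcome."""
    try:
        result = StepResult(agent, output=primary(ctx))
    except Exception as exc:
        result = StepResult(agent, success=False, error=str(exc))
        if fallback is not None:
            try:
                # Keep the original error so the recovery stays visible.
                result = StepResult(agent, output=fallback(ctx), error=str(exc))
            except Exception as exc2:
                result = StepResult(agent, success=False, error=str(exc2))
    ctx.results[key] = result
    return result


# Stub agents: one that always fails, one that serves as a recovery path.
def flaky_search(ctx: RunContext) -> str:
    raise TimeoutError("search backend timed out")


def cached_answer(ctx: RunContext) -> str:
    return f"cached notes for: {ctx.original_query}"


ctx = RunContext("compare vector databases")
run_step(ctx, "findings", "research", flaky_search, fallback=cached_answer)
run_step(ctx, "analysis", "code", flaky_search)  # no fallback available
print(ctx.failed_steps())  # ['analysis']
```

Before synthesis, the supervisor can check `failed_steps()` and either retry, re-plan, or tell the user what is missing, instead of writing a confident answer on top of a hole.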

Building an agentic AI system or multi-agent pipeline? This is where I live. Let's talk architecture.
