Uncertainty-Aware Medical AI: Our IEEE 2026 Bayesian Pneumonia Detection Paper

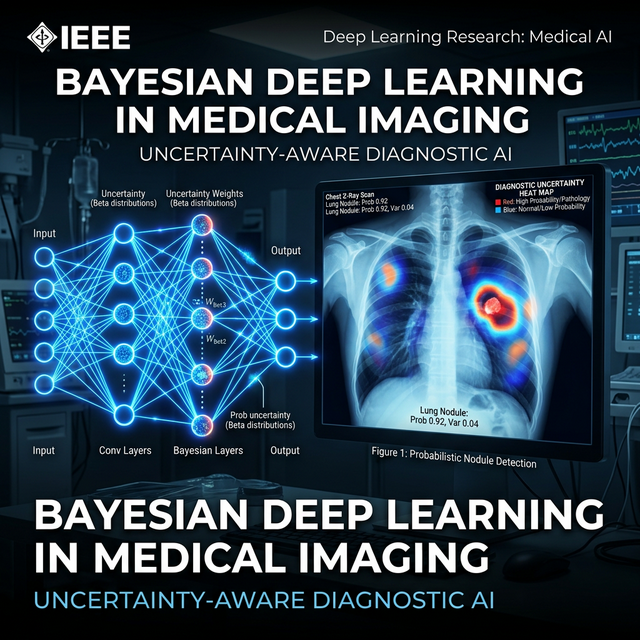

Standard CNNs tell you what they think: "this X-ray shows pneumonia." Bayesian deep learning also tells you how confident the model is. That difference is the entire motivation behind our IEEE 2026 paper.

Why Medical AI Needs to Know What It Doesn't Know

A standard deep learning model for pneumonia detection can achieve very high accuracy on test sets. But it has a dangerous failure mode: it reports its answer with similar confidence whether the input is a clear-cut case or an ambiguous, edge-case X-ray. In clinical care, a confidently wrong answer is worse than "I'm not sure."

This is the problem our research addressed: can we build a neural network that not only classifies chest X-rays but also quantifies its own uncertainty — so clinicians know when to trust the model and when to get a second opinion?

The Bayesian Approach: MC Dropout

We used Monte Carlo Dropout as our approximation of Bayesian inference. The concept is elegant in its simplicity:

- Normally, dropout layers are disabled during inference (test time)

- In MC Dropout, you keep dropout active during inference

- Run the same input through the network N times (we used N=50)

- Get N different predictions (each is a "sample" from the approximate posterior predictive distribution)

- The mean of predictions = the model's best estimate

- The variance/entropy of predictions = the model's uncertainty
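The last two bullets can be made concrete without any framework. The sketch below is a minimal, standard-library illustration, not the paper's implementation: the function name `mc_summary` and the sample values are hypothetical, and it assumes each stochastic pass has already been converted to a probability vector for a single image.

```python
import math

def mc_summary(samples):
    """Summarize N stochastic forward passes for a single image.

    samples: list of N probability vectors (each sums to 1), one per pass.
    Returns (mean_probs, mean_variance, predictive_entropy).
    """
    n = len(samples)
    num_classes = len(samples[0])
    # Mean over passes: the model's best estimate of each class probability.
    mean_probs = [sum(s[c] for s in samples) / n for c in range(num_classes)]
    # Population variance over passes (divides by N), averaged across classes.
    variances = [
        sum((s[c] - mean_probs[c]) ** 2 for s in samples) / n
        for c in range(num_classes)
    ]
    mean_variance = sum(variances) / num_classes
    # Entropy of the mean prediction, in nats; 0 * log(0) is treated as 0.
    entropy = -sum(p * math.log(p) for p in mean_probs if p > 0)
    return mean_probs, mean_variance, entropy

# Made-up samples for illustration only (columns: normal, pneumonia).
agreeing = [[0.05, 0.95], [0.04, 0.96], [0.06, 0.94]]
disagreeing = [[0.10, 0.90], [0.55, 0.45], [0.85, 0.15]]
```

When the passes agree, both the variance and the entropy stay small. When they disagree, the mean drifts toward 50/50 and the entropy approaches its two-class maximum of ln 2.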

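    import torch

    # model and x_ray_tensor are assumed to be defined elsewhere.
    # During inference: keep dropout active, everything else in eval mode
    model.eval()
    for module in model.modules():
        if module.__class__.__name__.startswith("Dropout"):
            module.train()  # re-enable dropout only; BatchNorm stays frozen

    predictions = []
    with torch.no_grad():
        for _ in range(50):  # N stochastic passes
            logits = model(x_ray_tensor)
            predictions.append(torch.softmax(logits, dim=1))

    # Stack and analyze
    preds = torch.stack(predictions)   # shape: (N, batch, classes)
    mean_pred = preds.mean(0)          # mean class probabilities (best estimate)
    uncertainty = preds.var(0).mean()  # variance across passes (uncertainty estimate)

Results and Clinical Relevance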

Our model achieved competitive classification performance (AUC > 0.93) while providing a calibrated uncertainty score. The key finding: cases with high uncertainty correlated strongly with cases where radiologists themselves disagreed on the diagnosis. The model had learned, implicitly, what was hard.
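One way an uncertainty score like this could be used in practice is as a deferral rule: accept the model's label when uncertainty is low, and route the case to a radiologist when it is high. The sketch below is illustrative only. The function name `triage` and both thresholds are hypothetical, not values from the paper, and in a real system they would need to be tuned on validation data.

```python
def triage(pneumonia_prob, entropy, max_entropy=0.5, decision_threshold=0.5):
    """Return a label, or defer to a radiologist when uncertainty is high.

    pneumonia_prob: mean predicted probability of pneumonia across passes.
    entropy: predictive entropy of the mean prediction, in nats.
    max_entropy and decision_threshold are hypothetical placeholder values.
    """
    if entropy > max_entropy:
        return "refer to radiologist"
    return "pneumonia" if pneumonia_prob >= decision_threshold else "normal"
```

For example, `triage(0.95, 0.20)` returns a label, while `triage(0.50, 0.69)` defers, because a 50/50 prediction sits at nearly the maximum entropy for two classes.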

"An AI that knows what it doesn't know is far more valuable in clinical settings than one that is always confident."

What Made This Research-Grade Work

This wasn't a Kaggle competition. Getting to IEEE publication quality required:

- Rigorous calibration testing: reliability diagrams comparing predicted confidence against observed accuracy

- Comparison against baselines including standard CNNs and ensemble methods

- Clinical validation of uncertainty scores against radiologist agreement data

- Statistical significance testing on all performance numbers
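To give a sense of what the calibration check involves, here is a minimal sketch of the binning behind a reliability diagram, plus expected calibration error (ECE) as a one-number summary. It is a generic illustration rather than the paper's evaluation code: the function names, the choice of 10 equal-width bins, and the toy data are all assumptions.

```python
def reliability_bins(confidences, correct, num_bins=10):
    """Group predictions into equal-width confidence bins.

    confidences: predicted probability of the chosen class, in [0, 1].
    correct: 1 if the prediction was right, else 0 (same length).
    Returns a list of (mean_confidence, accuracy, count) for non-empty bins.
    """
    bins = [[] for _ in range(num_bins)]
    for conf, hit in zip(confidences, correct):
        # Clamp so that conf == 1.0 lands in the last bin.
        index = min(int(conf * num_bins), num_bins - 1)
        bins[index].append((conf, hit))
    summary = []
    for items in bins:
        if items:
            mean_conf = sum(c for c, _ in items) / len(items)
            accuracy = sum(h for _, h in items) / len(items)
            summary.append((mean_conf, accuracy, len(items)))
    return summary

def expected_calibration_error(confidences, correct, num_bins=10):
    """Weighted average gap between confidence and accuracy across bins."""
    total = len(confidences)
    return sum(
        count / total * abs(mean_conf - accuracy)
        for mean_conf, accuracy, count
        in reliability_bins(confidences, correct, num_bins)
    )

# Made-up toy predictions for illustration only.
confidences = [0.95, 0.95, 0.95, 0.95, 0.65, 0.65]
correct = [1, 1, 1, 0, 1, 0]
ece = expected_calibration_error(confidences, correct)
```

Plotting each bin's mean confidence against its accuracy gives the reliability diagram. A well-calibrated model sits close to the diagonal and has an ECE near zero.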

It took months of iteration to make the uncertainty quantification reliable enough to write up with confidence.

Interested in AI for medical imaging, uncertainty quantification, or Bayesian methods? Let's connect.
