When Junior College Vector Math Saved My Real-World AI Project

How 11th & 12th Grade Math Became the Cornerstone of My AI Career

In 11th grade, I struggled with vectors. The teacher showed us dot products, cross products, and cosine of angles between vectors. I passed the exam by memorizing formulas I didn't fully understand. Six years later, those exact formulas are at the core of the AI system that achieved ~99% LLM token reduction in production. Math class turned out to matter.

The Problem That Brought Me Back to 11th Grade

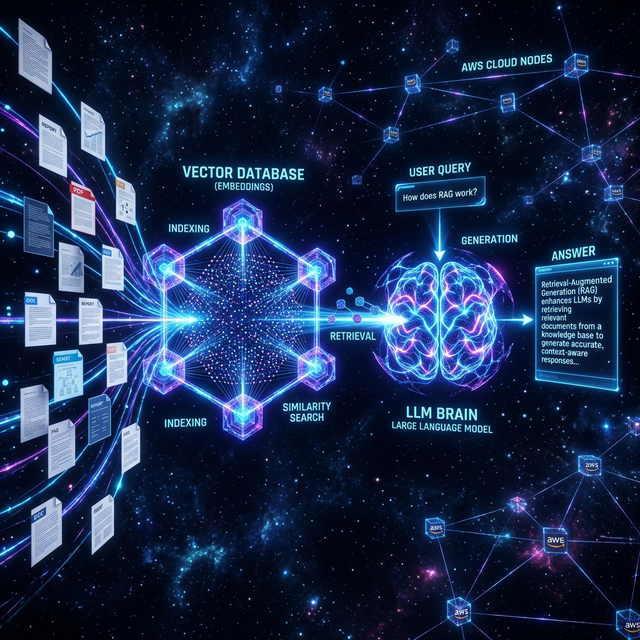

At Idyllic Services, I was building a RAG (Retrieval-Augmented Generation) pipeline. The core challenge: given a user's query, find the most relevant documents from a knowledge base of thousands, then pass only those relevant documents to the LLM instead of the entire knowledge base.

This is fundamentally a search problem. But not keyword search — semantic search. The user might ask "how do I handle a refund?" and the relevant document might say "customer reimbursement process" — no keyword overlap, but same meaning.
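To make the "no keyword overlap" point concrete, here is a minimal sketch of what a naive keyword matcher sees for those two phrases. The helper names `words` and `keyword_overlap` are illustrative and not part of the original pipeline.

```python
import re


def words(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def keyword_overlap(query: str, document: str) -> set[str]:
    """Return the words that appear in both the query and the document."""
    return words(query) & words(document)


shared = keyword_overlap("how do I handle a refund?",
                         "customer reimbursement process")
print(shared)  # set(): the two phrases share no words at all
```

A keyword engine has nothing to match on here, which is why the comparison has to happen on meaning rather than on words.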

The solution: vector embeddings and similarity search on AWS OpenSearch.

What Vector Embeddings Actually Are

An embedding model takes text and converts it to a high-dimensional vector — a list of numbers, typically 768 or 1536 dimensions. The magic: semantically similar text ends up in similar regions of this vector space.

"How do I handle a refund?" and "customer reimbursement process" — different words, but similar meaning, so their vectors end up close to each other in 1536-dimensional space.

    from openai import OpenAI

    client = OpenAI()

    def embed_text(text):
        response = client.embeddings.create(
            model="text-embedding-3-small",
            input=text,
        )
        return response.data[0].embedding  # List of 1536 floats

Here's Where 11th Grade Math Appears

To find which stored document is most similar to a query, you compare vectors. The metric used is cosine similarity — the cosine of the angle between two vectors.

Remember this formula from 11th grade?

    # Cosine similarity, exactly what we learned in high school
    # cos(θ) = (A · B) / (|A| × |B|)
    import numpy as np

    def cosine_similarity(vec_a, vec_b):
        dot_product = np.dot(vec_a, vec_b)  # The dot product!
        magnitude_a = np.linalg.norm(vec_a)
        magnitude_b = np.linalg.norm(vec_b)
        # Undefined if either vector has zero length (division by zero)
        return dot_product / (magnitude_a * magnitude_b)

    # Similarity near 1.0 = nearly the same direction = very similar meaning
    # Similarity near 0.0 = roughly perpendicular = unrelated
    # Similarity of -1.0 = opposite direction (text embeddings rarely land
    # here, and it does not reliably mean "opposite meaning")

That's it. The core of semantic search in production AI is the dot product formula from 11th grade physics class. Same formula. Same math. Applied to 1536-dimensional vectors instead of 3-dimensional arrows.
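To see the formula at work on numbers small enough to check by hand, here is a minimal sketch using only the standard library. The vectors `a` and `b` are made-up 3-dimensional examples, not real embeddings, and `cosine` and `normalize` are illustrative helper names.

```python
import math


def cosine(a, b):
    """cos(theta) = (A . B) / (|A| * |B|)"""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def normalize(v):
    """Scale a vector to length 1 without changing its direction."""
    length = math.sqrt(sum(x * x for x in v))
    return [x / length for x in v]


a = [1.0, 2.0, 2.0]  # |a| = sqrt(1 + 4 + 4) = 3
b = [2.0, 1.0, 2.0]  # |b| = sqrt(4 + 1 + 4) = 3

# A . B = 1*2 + 2*1 + 2*2 = 8, so cos(theta) = 8 / (3 * 3) = 8/9
print(cosine(a, b))  # about 0.889

# For unit-length vectors the denominator is 1, so the plain dot product
# already equals the cosine similarity.
unit_a, unit_b = normalize(a), normalize(b)
print(sum(x * y for x, y in zip(unit_a, unit_b)))  # also about 0.889
```

The second print shows why vectors are often normalized once at indexing time: after that, ranking by cosine similarity needs only a dot product.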

"The moment I recognized the cosine similarity formula in my production code, I went back and re-read my 11th grade notes. They made complete sense for the first time."

The Production Implementation on AWS OpenSearch

In practice, I didn't implement cosine similarity manually. OpenSearch (the open-source fork of Elasticsearch that AWS offers as the managed Amazon OpenSearch Service) handles this at scale through its k-NN vector search. The workflow:

- Index documents: embed each document and store the vector in OpenSearch alongside the text

- Query: embed the user's query, then ask OpenSearch for the K most similar vectors

- Retrieve: get the top-K most relevant documents

- Generate: pass only those K documents (instead of the entire knowledge base) to the LLM
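The indexing step assumes an index that can store vectors and compare them by cosine similarity. A minimal sketch of what that could look like with the OpenSearch k-NN plugin follows. The field names `text` and `embedding` match the query code in this post; the `hnsw` method and the helper names `knn_index_body` and `document_body` are assumptions for illustration, not the original production configuration. Which engines support `cosinesimil` varies by OpenSearch version, so check the k-NN documentation for yours.

```python
def knn_index_body(dimension: int = 1536) -> dict:
    """Build an index definition with a k-NN vector field.

    space_type "cosinesimil" asks the k-NN plugin to rank by cosine
    similarity; the plugin's default would otherwise be L2 distance.
    """
    return {
        "settings": {"index": {"knn": True}},
        "mappings": {
            "properties": {
                "text": {"type": "text"},
                "embedding": {
                    "type": "knn_vector",
                    "dimension": dimension,
                    # Assumed method settings, shown for illustration only
                    "method": {"name": "hnsw", "space_type": "cosinesimil"},
                },
            }
        },
    }


def document_body(text: str, vector: list[float]) -> dict:
    """Pair a document's text with its embedding for indexing."""
    return {"text": text, "embedding": vector}


body = knn_index_body()
print(body["mappings"]["properties"]["embedding"]["dimension"])  # 1536
```

With the opensearch-py client, these dictionaries would typically be passed to `opensearch_client.indices.create(index="knowledge-base", body=knn_index_body())` and `opensearch_client.index(index="knowledge-base", body=document_body(text, embed_text(text)))`.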

    # opensearch_client is assumed to be an opensearch-py client
    # configured elsewhere for your cluster.

    def semantic_search(query, top_k=3):
        # Step 1: Embed the query
        query_vector = embed_text(query)

        # Step 2: Search OpenSearch
        search_body = {
            "size": top_k,
            "query": {
                "knn": {
                    "embedding": {
                        "vector": query_vector,
                        "k": top_k,
                    }
                }
            },
        }
        response = opensearch_client.search(
            index="knowledge-base",
            body=search_body,
        )

        # Step 3: Return matched documents
        return [hit["_source"]["text"]
                for hit in response["hits"]["hits"]]

Why This Produced ~99% Token Reduction

Before this system, the pipeline sent the entire knowledge base to the LLM as context: thousands of documents in every prompt, which meant expensive API calls and slow responses.

After implementing semantic search, the LLM receives only the 3 most relevant documents. That is roughly 99% fewer tokens, with much lower cost and much faster responses. Same answer quality, because the right information was selected by the vector math.
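The size of that reduction follows from simple arithmetic. Here is a rough sketch with hypothetical numbers: the document count and tokens-per-document below are made up for illustration and are not measurements from the production system. It assumes every document is the same length and ignores the tokens used by the query and instructions.

```python
def token_reduction(total_docs: int, retrieved_docs: int,
                    tokens_per_doc: int) -> float:
    """Fraction of context tokens saved by sending only the retrieved docs."""
    before = total_docs * tokens_per_doc      # whole knowledge base in the prompt
    after = retrieved_docs * tokens_per_doc   # only the top-K documents
    return 1 - after / before


# Hypothetical: 1,000 documents of 400 tokens each, top 3 retrieved
print(f"{token_reduction(1000, 3, 400):.1%}")  # 99.7%
```

Under these assumptions the saving depends only on the ratio of retrieved documents to total documents, so it grows as the knowledge base grows.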

The Irony and the Lesson

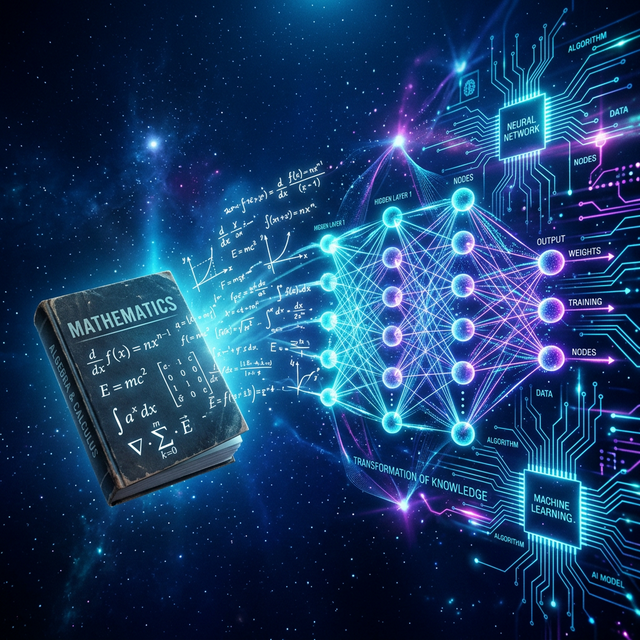

The irony is perfect: the math I struggled with in junior college — the formulas I memorized without understanding — turned out to be the literal mathematical foundation of one of the most important features in modern AI systems. RAG pipelines, semantic search, nearest-neighbor retrieval — all fundamentally linear algebra.

The lesson I take from this: foundational mathematics is not abstract. It's infrastructure. You might not see the application for years. But one day you'll be in a production system and you'll recognize the formula, and suddenly the past and present will connect in a way that feels almost magical.

Learn the fundamentals thoroughly. Not because they'll be on the exam. Because they'll be in your production code.

Want to talk about RAG pipelines, vector search, or AI architecture? Let's connect.
