ResuGenie: Deploying an AI Resume Parser on AWS

How We Beat 50+ Teams and Won the Hackathon with an AI Resume Parser

ResuGenie started as a 24-hour hackathon project. It won first place against 50+ teams. Then came the real challenge: turning a hackathon prototype into a production AI system on AWS. The gap between those two things is wider than most people realize.

What ResuGenie Does

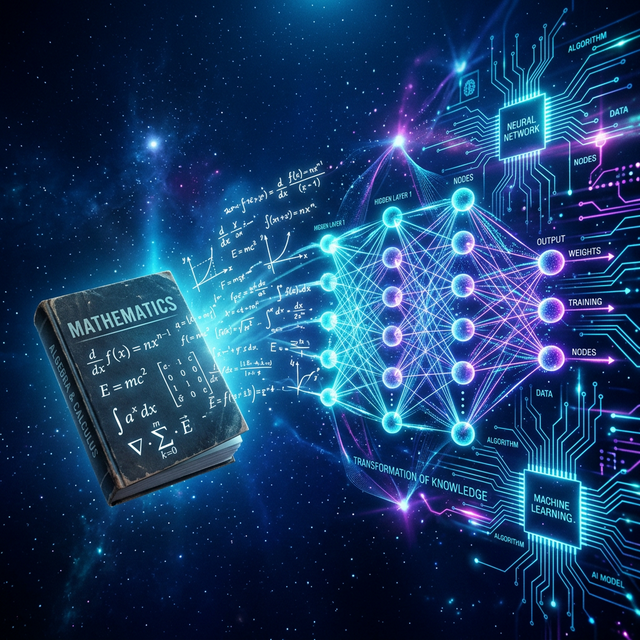

ResuGenie is an AI-powered resume intelligence system. It parses resumes, extracts structured information, and performs semantic matching between resumes and job descriptions. Not keyword matching — semantic matching. A resume that says "built distributed systems" should match a JD that says "experience with scalable infrastructure" even without keyword overlap.
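To make the distinction concrete: semantic matching typically compares embedding vectors with cosine similarity instead of comparing words. The sketch below is a minimal illustration, not ResuGenie's actual code. The three-dimensional vectors are made-up stand-ins for real embeddings, which have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as matching nothing
    return dot / (norm_a * norm_b)


# Hypothetical toy embeddings, hand-picked for illustration only.
resume_phrase = [0.9, 0.1, 0.3]  # stands in for "built distributed systems"
jd_phrase = [0.8, 0.2, 0.4]      # stands in for "experience with scalable infrastructure"
unrelated = [0.0, 1.0, 0.0]      # stands in for an unrelated phrase

related_score = cosine_similarity(resume_phrase, jd_phrase)
unrelated_score = cosine_similarity(resume_phrase, unrelated)
```

With real embeddings, two phrases that mean similar things score close to each other even when they share no words, which is the property the matching relies on.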

The hackathon version was a Flask app running locally with a SQLite database and OpenAI API calls. It worked beautifully in the demo. It would have collapsed under any real load.

The Production Architecture

Moving to production required a complete architectural redesign:

    ┌─────────────┐     ┌──────────────────┐     ┌─────────────────┐
    │   AWS ECS   │────▶│  Amazon Bedrock  │────▶│ AWS OpenSearch  │
    │   Fargate   │     │  (Claude model)  │     │ (Vector Store)  │
    └─────────────┘     └──────────────────┘     └─────────────────┘
           │                                              │
           ▼                                              ▼
    ┌─────────────┐                              ┌─────────────────┐
    │   AWS S3    │                              │   kNN Vector    │
    │  (Storage)  │                              │   Similarity    │
    └─────────────┘                              └─────────────────┘

AWS ECS Fargate: The Deployment Layer

The hackathon app was a single Python process. Production needed horizontal scaling, health checks, rolling deployments, and zero-downtime updates.

I chose ECS Fargate over EC2 for a specific reason: Fargate runs containers without servers to manage. You define the task, and AWS manages the underlying infrastructure. There is no server provisioning, no AMI management, and no capacity planning for the container host.

Key Fargate configuration:

Task definition (simplified). A cpu value of 1024 units is 1 vCPU, and a memory value of 2048 MiB is 2 GB:

    {
      "family": "resugenie-task",
      "networkMode": "awsvpc",
      "requiresCompatibilities": ["FARGATE"],
      "cpu": "1024",
      "memory": "2048",
      "containerDefinitions": [{
        "name": "resugenie",
        "image": "ACCOUNT.dkr.ecr.REGION.amazonaws.com/resugenie:latest",
        "portMappings": [{"containerPort": 8000}],
        "environment": [
          {"name": "OPENSEARCH_HOST", "value": "..."},
          {"name": "AWS_REGION", "value": "us-east-1"}
        ]
      }]
    }

Amazon Bedrock: The LLM Layer

The hackathon version used OpenAI's API directly. Production moved to Amazon Bedrock for two reasons: data privacy (resumes are sensitive documents and we wanted them to stay within our AWS environment) and cost predictability.

Bedrock provides managed access to foundation models — Claude, Titan, Mistral — with IAM authentication instead of API keys, which integrated cleanly with our existing AWS security posture.

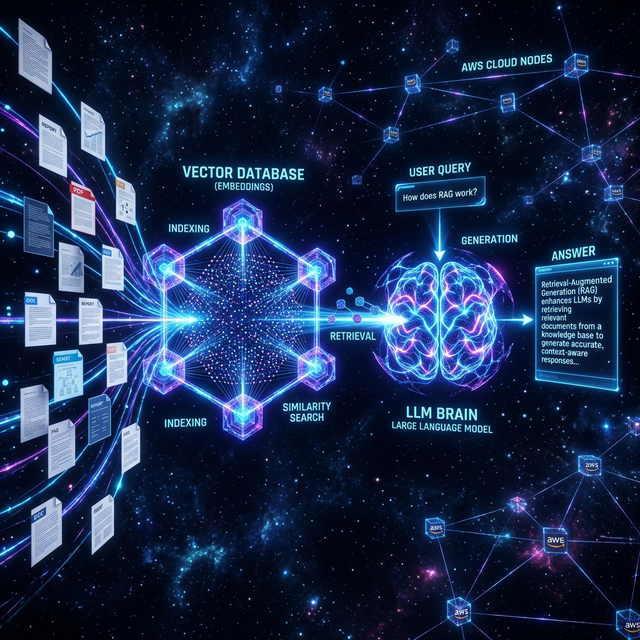
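A rough sketch of what an IAM-authenticated Bedrock call can look like, using the Converse API exposed by boto3's `bedrock-runtime` client. This is an assumption about the shape of the integration, not the project's actual code: the function names, the prompt wording, and the `model_id` argument are all hypothetical. The client is passed in so the function can be exercised without AWS access.

```python
def build_extraction_prompt(resume_text):
    """Prompt asking the model for structured fields as JSON (wording is illustrative)."""
    return (
        "Extract the candidate's name, skills, and work history from the resume "
        "below. Respond with JSON only.\n\n" + resume_text
    )


def extract_resume_fields(client, model_id, resume_text, max_tokens=1024):
    """Send the resume to a Bedrock model via Converse and return its text reply."""
    response = client.converse(
        modelId=model_id,
        messages=[
            {"role": "user", "content": [{"text": build_extraction_prompt(resume_text)}]}
        ],
        inferenceConfig={"maxTokens": max_tokens, "temperature": 0.0},
    )
    # Converse returns the assistant message as a list of content blocks.
    blocks = response["output"]["message"]["content"]
    return "".join(block.get("text", "") for block in blocks)
```

In a running service the client would be created with `boto3.client("bedrock-runtime", region_name=...)` and called with the ID of a Claude model enabled in the account. On ECS the credentials would typically come from the task's IAM role, so no API key appears in code or configuration.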

OpenSearch: The Vector Store

The core intelligence of ResuGenie is semantic matching: comparing embeddings of resume sections to embeddings of job description requirements. Amazon OpenSearch Service (AWS's managed service for OpenSearch, the open-source fork of Elasticsearch) supports k-NN vector search out of the box through its k-NN plugin.
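For k-NN search to work, the index has to declare the vector field up front. A minimal sketch of such an index body follows, assuming the `embedding` and `text` field names that appear in the query code in this section. The helper name and the dimension value are hypothetical, and the dimension has to match whatever embedding model is used.

```python
def build_knn_index_body(dimension):
    """Index settings and mapping for storing resume-section embeddings."""
    return {
        "settings": {"index": {"knn": True}},  # enable k-NN search on this index
        "mappings": {
            "properties": {
                # Vector field queried by the k-NN search.
                "embedding": {"type": "knn_vector", "dimension": dimension},
                # Raw text of the resume section, returned with each hit.
                "text": {"type": "text"},
            }
        },
    }


# With an opensearch-py client this would be applied roughly as:
#   client.indices.create(index="resume-embeddings", body=build_knn_index_body(1024))
# where 1024 is a placeholder; use the output size of your embedding model.
```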

Every resume section is embedded and stored. When a JD comes in, we embed each requirement and query OpenSearch for the closest matching resume sections. The results are scored and assembled into a match report.

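    # `opensearch` is assumed to be an opensearch-py client and `embed_text` the
    # embedding helper; both are set up elsewhere in the application.
    def match_resume_to_jd(resume_sections, jd_requirements):
        # resume_sections are assumed to be embedded and indexed already;
        # the query below searches the whole index.
        scores = []
        for requirement in jd_requirements:
            req_embedding = embed_text(requirement)
            # Find the closest resume section
            results = opensearch.search(
                index="resume-embeddings",
                body={
                    "size": 1,
                    "query": {
                        "knn": {
                            "embedding": {
                                "vector": req_embedding,
                                "k": 1
                            }
                        }
                    }
                }
            )
            hits = results["hits"]["hits"]
            if not hits:
                # Nothing indexed to match against; skip instead of raising IndexError
                continue
            best_match = hits[0]
            scores.append({
                "requirement": requirement,
                "matched_section": best_match["_source"]["text"],
                "score": best_match["_score"]
            })
        return scores

The Challenges: Prototype to Production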

Three specific problems the hackathon version didn't face:

- Cold start latency: A new Fargate task can take 30-60 seconds to start while the image is pulled and the app boots. For a demo this is fine. For production, you need a minimum number of always-running tasks and auto-scaling policies that add capacity before load arrives.

- Embedding costs: Embedding every resume and JD has real API cost. We implemented caching — same text hash gets the cached embedding, not a new API call.

- Resume format diversity: Hackathon resumes were clean PDFs. Production resumes arrive as .docx, .pdf with images, scanned documents, two-column layouts. Parsing became its own engineering problem.
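The embedding cache from the second point can be sketched in a few lines. This is a minimal in-memory version under stated assumptions: the class name and the `embed_fn` argument are hypothetical, and SHA-256 is one reasonable choice of text hash (the original does not say which hash was used).

```python
import hashlib


class CachedEmbedder:
    """Wraps an embedding function and reuses results for identical text."""

    def __init__(self, embed_fn):
        self._embed_fn = embed_fn  # e.g. a function that calls the embedding API
        self._cache = {}           # text hash -> embedding (in-memory, for illustration)
        self.api_calls = 0         # number of times embed_fn was actually invoked

    def embed(self, text):
        key = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if key not in self._cache:
            self.api_calls += 1
            self._cache[key] = self._embed_fn(text)
        return self._cache[key]
```

An in-memory dictionary is private to each Fargate task, so a multi-task service would probably need a shared store to get the full saving. Which store the production system used is not stated here.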

What the Hackathon Didn't Teach

The hackathon taught me that the idea worked. Production taught me everything else: infrastructure, scaling, observability, cost optimization, error recovery, and the endless variety of real-world inputs.

If I had to summarize the lesson in one sentence: a prototype proves the concept; production proves the engineering. They require completely different skills.

Want to discuss AWS architecture, RAG pipelines, or AI system design? I'm always up for a technical conversation.
